Keyword Negativation in Google Ads

Paying for irrelevant search queries? A good negativation strategy can easily save you 20% of your budget without losing significant conversion value.

CHALLENGES OF A DECENT NEGATIVATION STRATEGY IN GOOGLE ADS

Google’s recent changes to keyword match types have often resulted in poorly performing queries. You can easily analyze the negative impact of close variant query matches here.

Keeping control over those queries and setting negative keywords from the beginning will minimize wasted ad spend and lead to better revenue. PPC experts don’t fully trust the Google Ads black box when it comes to negative keywords. The following approaches will also help you find negative keywords for Amazon or Bing PPC campaigns.

Low sample size on query level

You shouldn’t judge most of your search queries by looking only at their conversions. Depending on your overall conversion rate, you normally need a few hundred clicks before you can make a good decision. Most complete queries never reach that volume, so don’t look only at complete queries, as they limit your decisions.
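The "few hundred clicks" rule of thumb can be made concrete with a rough calculation. The sketch below assumes that every click converts independently at the account's average conversion rate, so the chance of seeing zero conversions in n clicks is (1 - rate) ** n. It returns the number of clicks after which zero conversions would be unlikely for an average query. The function name `clicks_needed` and the 5% cut-off are illustrative assumptions, not a fixed standard.

```python
import math

def clicks_needed(conversion_rate: float, alpha: float = 0.05) -> int:
    """Smallest n for which (1 - conversion_rate) ** n <= alpha.

    Assumption: clicks convert independently at the account average rate.
    """
    if not 0 < conversion_rate < 1:
        raise ValueError("conversion_rate must be between 0 and 1")
    return math.ceil(math.log(alpha) / math.log(1 - conversion_rate))

# Illustrative account-level conversion rates
for rate in (0.01, 0.02, 0.05):
    print(f"{rate:.0%} conversion rate: {clicks_needed(rate)} clicks")
```

With a 2% conversion rate this gives 149 clicks, and with 1% it gives 299, which is why a single query with a handful of clicks rarely supports a decision.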

Google removed irrelevant search terms

Google announced that it would remove “not significant” search queries from its reports. That means the noise in your search queries is now hidden, or at least it takes longer than before to reach the “significant” level.

Search Term Reports can get quite large

More data generally gives a better analysis. Select the widest date range available, and don’t apply any filters. That means your Search Term Reports can easily reach file sizes above 100 MB. Most PPC managers filter the data first because they struggle to handle those file sizes in Excel. Google recently added a large number of zero-click queries to the report, which resulted in reports that are 6-7 times bigger.
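One way around the Excel limit is to stream the report row by row instead of loading it all at once. The following is a minimal sketch using Python's standard `csv` module. The column names `Search term`, `Clicks`, and `Cost` are assumptions, so adjust them to the headers of your actual export.

```python
import csv
import io
from collections import defaultdict

def aggregate_report(lines):
    """Stream a CSV report and sum clicks and cost per search term.

    `lines` is any iterable of CSV lines, for example an open file handle.
    Column names are assumed, not taken from a specific export format.
    """
    totals = defaultdict(lambda: {"clicks": 0, "cost": 0.0})
    for row in csv.DictReader(lines):
        term = row["Search term"].strip().lower()
        totals[term]["clicks"] += int(row["Clicks"])
        totals[term]["cost"] += float(row["Cost"])
    return dict(totals)

# Small in-memory sample standing in for a large export
sample = io.StringIO(
    "Search term,Clicks,Cost\n"
    "cheap hostel berlin,3,1.50\n"
    "Cheap Hostel Berlin,0,0.00\n"
    "hostel berlin,10,7.25\n"
)
report = aggregate_report(sample)
```

For a real export, pass an open file handle instead, for example `open("search_terms.csv", newline="", encoding="utf-8")`, where the file name is hypothetical. Only the aggregated totals stay in memory, so zero-click rows add reading time but not much else.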

Google limits the maximum number of negative keywords

Google sends more and more unique queries to your existing keywords by imposing close variant matching. When it comes to negatives, they give you hard limits per account, and you have to find a way to deal with them effectively.

HOW TO FIND NEGATIVE KEYWORDS IN GOOGLE ADS

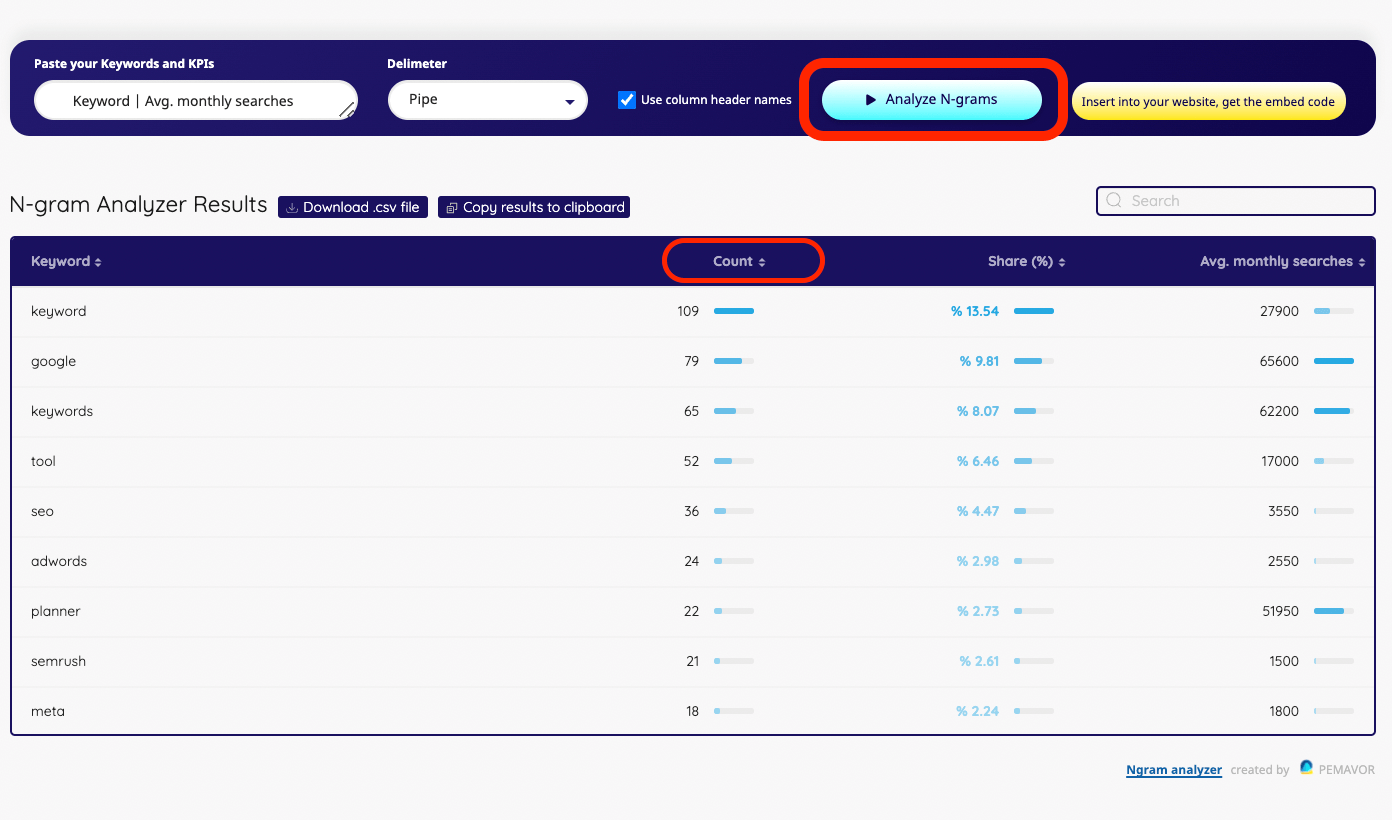

N-gram Analysis of search terms

When you look at complete queries, a lot of bad search components stay hidden. By splitting the search terms into single words or phrases, you’ll get a completely new perspective on your data. The numbers per N-gram will be higher than for full queries, which makes it easier to get stable performance KPIs for your decisions. Using N-grams as negatives will also block future, unseen queries that share the same pattern. You can use our free N-gram Analyzer tool to get initial insights right away.
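The splitting step can be sketched in a few lines. This is an illustrative example, not the N-gram Analyzer tool itself: the function names and the sample rows are made up, and each row is assumed to hold a query with its clicks, cost, and conversions.

```python
from collections import defaultdict

def ngrams(query, n):
    """Return all n-word phrases contained in the query."""
    words = query.lower().split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]

def ngram_stats(rows, max_n=2):
    """Aggregate KPIs per N-gram. rows: (query, clicks, cost, conversions)."""
    stats = defaultdict(lambda: {"clicks": 0, "cost": 0.0, "conversions": 0.0})
    for query, clicks, cost, conversions in rows:
        grams = set()
        for n in range(1, max_n + 1):
            grams.update(ngrams(query, n))
        for gram in grams:  # the set counts each N-gram once per query
            stats[gram]["clicks"] += clicks
            stats[gram]["cost"] += cost
            stats[gram]["conversions"] += conversions
    return dict(stats)

# Made-up sample data
rows = [
    ("cheap hostel berlin", 40, 20.0, 0),
    ("cheap hotel berlin", 35, 18.0, 0),
    ("hotel berlin center", 50, 30.0, 4),
]
stats = ngram_stats(rows)
print(stats["cheap"])
```

In this sample, neither query containing "cheap" has enough clicks on its own, but the 1-gram "cheap" collects 75 clicks with no conversions, which is a much more stable basis for a decision.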

Entity recognition for grouping N-grams into bigger clusters

Even with an N-gram approach, you’ll run into cases where you have sparse data. By clustering similar N-grams together, you’ll increase the numbers behind each decision. You can use entity recognition to create those clusters. Of course, it takes some time and effort to create an entity database for your business, but it’ll eventually be worth it.
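As a rough illustration of the clustering idea, the sketch below uses a plain dictionary as a stand-in for an entity database. The entities, words, and numbers are hypothetical. A real setup would need a much larger, business-specific database and possibly a proper entity recognition model.

```python
from collections import defaultdict

# Hypothetical entity database: maps a word to the cluster it belongs to
ENTITIES = {
    "berlin": "city", "munich": "city", "hamburg": "city",
    "cheap": "price", "budget": "price", "free": "price",
}

def entity_stats(ngram_rows):
    """Sum clicks and conversions per entity.

    ngram_rows: iterable of (one-word N-gram, clicks, conversions).
    Words missing from the database end up in the "unknown" cluster.
    """
    stats = defaultdict(lambda: {"clicks": 0, "conversions": 0})
    for gram, clicks, conversions in ngram_rows:
        entity = ENTITIES.get(gram.lower(), "unknown")
        stats[entity]["clicks"] += clicks
        stats[entity]["conversions"] += conversions
    return dict(stats)

# Made-up N-gram data: each price word alone has too few clicks
clusters = entity_stats([
    ("cheap", 30, 0),
    ("budget", 25, 0),
    ("free", 40, 0),
    ("berlin", 120, 6),
])
```

None of the three price words reaches 100 clicks alone, but the "price" cluster sums to 95 clicks with no conversions, so the pattern shows up much earlier.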

Identify close variants to existing negatives

Even when you add a negative keyword in broad match, it won’t block a query if you use the singular in your negative and the user searches for the plural version. Quite annoying. The only way to deal with it is to add all those close variants. Such cases have skyrocketed since Google started forcing its close variant matches, but luckily we have the solution.
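To give an idea of what finding missing variants can look like, here is a deliberately naive sketch for single-word negatives. The pluralization rules are simplified English heuristics and an assumption of this example. They will produce wrong forms for irregular words, and they do not cover misspellings.

```python
def close_variants(word):
    """Return naive English singular/plural variants of one word."""
    word = word.lower()
    variants = {word}
    if word.endswith("ies") and len(word) > 4:
        variants.add(word[:-3] + "y")        # parties -> party
    elif word.endswith("s") and not word.endswith("ss"):
        variants.add(word[:-1])              # hostels -> hostel
    elif word.endswith("y") and len(word) > 1 and word[-2] not in "aeiou":
        variants.add(word[:-1] + "ies")      # party -> parties
    elif word.endswith(("ss", "x", "ch", "sh")):
        variants.add(word + "es")            # box -> boxes
    else:
        variants.add(word + "s")             # hostel -> hostels
    return sorted(variants)

def missing_variants(negatives):
    """List variants that are not yet part of the negative keyword list."""
    existing = {n.lower() for n in negatives}
    generated = {v for n in existing for v in close_variants(n)}
    return sorted(generated - existing)

print(missing_variants(["hostel", "jobs", "party"]))
```

Review the generated candidates before uploading them, since every added variant also counts against the negative keyword limits.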

Identify semantically similar words to existing negatives

Google also uses semantic similarity to match queries to your existing keywords, including queries that wouldn’t have triggered them in the past. You can use the same technology to identify words and phrases similar to your existing negatives.

Make use of the Google Ads API

If you need to handle big search term reports for multiple accounts, you should make use of the Google Ads API. Besides, the API is essential to automate the data processing and the rollout of identified new negative keywords. It’s also very convenient when you can publish the changes in a few seconds via the API instead of doing this manually.

Simulate the effects of new negatives before publishing

Sometimes you make decisions without a sufficient sample size. Running simulations on your new negative set helps prevent bad decisions. Want to learn a good approach? Don’t run the analysis on the complete data set of your queries. Set aside a hold-out query set first, and after applying your cut-off rules to the rest, check the results against that hold-out set.
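A hold-out check of this kind could look roughly like the sketch below. It assumes single-word negatives and rows of (query, cost, conversions). The function names, the 30% hold-out share, and the sample data are illustrative assumptions. The step that derives the negatives from the remaining data with your cut-off rules is not shown.

```python
import random

def split_holdout(rows, holdout_share=0.3, seed=42):
    """Shuffle the rows and split them into an analysis set and a hold-out set."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_holdout = round(len(rows) * holdout_share)
    return rows[n_holdout:], rows[:n_holdout]

def simulate(negatives, holdout):
    """Sum the cost and conversions that single-word negatives would block."""
    saved_cost = lost_conversions = 0.0
    for query, cost, conversions in holdout:
        if negatives & set(query.lower().split()):
            saved_cost += cost
            lost_conversions += conversions
    return {"saved_cost": saved_cost, "lost_conversions": lost_conversions}

# Made-up hold-out rows: (query, cost, conversions)
holdout = [
    ("free hostel berlin", 12.0, 0),
    ("hostel berlin", 30.0, 3),
    ("free wifi hostel", 8.0, 1),
]
result = simulate({"free"}, holdout)
```

Here the negative "free" would save 20.0 in cost but also lose one conversion on unseen queries, which is exactly the trade-off you want to see before publishing.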

Use data-driven notifications for new negative candidates

How often should you look for new negatives? Some PPC managers do it once a month. The truth is, it isn’t possible to give a good answer to that question. When you use all those approaches, you’ll have huge gains in the first weeks, but then things will stabilize. The best solution is a rule-based approach, where you get notified when there is a relevant number of possible new negative keywords. It’ll save you a lot of time.
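Such a rule can be as simple as the sketch below. The two thresholds, a minimum number of candidates and a minimum amount of wasted cost, are hypothetical values and should be tuned to the size of your account.

```python
def should_notify(candidates, min_candidates=20, min_wasted_cost=100.0):
    """Decide whether a review of new negative candidates is worth the time.

    candidates: list of (ngram, wasted_cost) pairs that are not negatives yet.
    Both thresholds are illustrative assumptions.
    """
    total_wasted = sum(cost for _, cost in candidates)
    return len(candidates) >= min_candidates or total_wasted >= min_wasted_cost

# One expensive candidate triggers a notification, one cheap candidate does not
print(should_notify([("free", 120.0)]))
print(should_notify([("free", 5.0)]))
```

Run the check on a schedule and only send a message when it returns `True`, so quiet weeks don't cost you any review time.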

Use your Google Analytics data

After the update to Google’s Search Terms Report, around 30% of the queries no longer appear. However, they’re still available in Google Analytics, and you should make use of them. There’s more: you can use bounces as an additional perspective for detecting noise. The great thing about bounces is that you get stable numbers after a few clicks. If you look only at conversions, you always struggle with sparse data, even with N-grams.

Analyze keywords, search queries or website titles

Search console queries or keyword planner data

Get instant results or download as CSV file

Paste up to 100,000 keywords with KPIs

Do You Feel Overwhelmed Managing Negative Keywords in Large Accounts?

Automate Negative Keywords for Large PPC Accounts

When managing large PPC accounts, keeping negative keywords under control can feel like an endless task. If your campaigns generate thousands of queries, manual methods or scripts may fall short. That’s where PhraseOn comes in.

PhraseOn automates negative keyword management, combining data science, AI-powered insights, and seamless integration with Google Ads. Save time, reduce wasted spend, and gain full control over your search terms at scale.

- Full Automation: Effortlessly manage negative keywords with predefined rules.

- N-Gram Analysis: Identify poor-performing patterns across large query sets.

- Entity Lists: Group keywords logically (e.g., competitors, products) for better campaign control.

- Budget Savings Insights: See estimated savings before applying changes.

- Close Variant Detection: Block unwanted search queries, including misspellings and variations.

AUTOMATION OF KEYWORD NEGATIVATION TASKS

Google Ads Script for assigning campaigns to shared sets

In big accounts with changing structures, it’s very easy to forget to link campaigns to existing shared sets with global negative keyword lists. By using our script, you can automate this task from now on. Provide the names of your shared sets, and all future campaigns will be connected automatically.

Google Ads Script for checking negative keyword conflicts

Consider the case where you blocked a search pattern because of its bad performance. The reason was that the search term related to a product or brand that was not in your inventory at the time. Now your inventory has changed, and new keywords for those products were added that are blocked by the old negatives. This Google Ads script by PEMAVOR detects such conflicts automatically.
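The core of a conflict check can be sketched as follows. This is not the PEMAVOR script, only an illustration in Python under two assumptions: the keyword text stands in for the queries it triggers, and negatives follow the usual rules (broad blocks when all its words are present in any order, phrase when they appear in the same order as a contiguous sequence, exact when the whole text is identical).

```python
def blocks(negative, match_type, text):
    """Return True if the negative keyword would block the given text."""
    neg, words = negative.lower().split(), text.lower().split()
    if match_type == "exact":
        return neg == words
    if match_type == "phrase":
        return any(words[i:i + len(neg)] == neg
                   for i in range(len(words) - len(neg) + 1))
    if match_type == "broad":
        return set(neg) <= set(words)
    raise ValueError(f"unknown match type: {match_type}")

def find_conflicts(keywords, negatives):
    """List (keyword, negative, match_type) combinations that conflict."""
    return [(kw, neg, mt) for kw in keywords
            for neg, mt in negatives if blocks(neg, mt, kw)]

# Made-up keywords and negatives
conflicts = find_conflicts(
    ["nike running shoes", "adidas sneakers"],
    [("nike", "broad"), ("running nike", "phrase"), ("adidas sneakers", "exact")],
)
```

In this sample, the broad negative "nike" and the exact negative "adidas sneakers" each block a keyword, while the phrase negative "running nike" does not because the word order differs.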

Google Ads Script for detecting duplicate negative keywords

The number of negative keywords is limited in Google Ads. For that reason, you should check for wasted space caused by negative keywords that block queries another negative already blocks. When you block hostel, the negative keyword cheap hostel is considered a duplicate. This script helps you maintain your negative keyword lists and get rid of duplicate negatives.
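The duplicate check from the hostel example can be sketched like this. The example only covers broad match negatives, where a negative is redundant once another negative made of a strict subset of its words exists. The function name and the sample list are assumptions, and the actual script may work differently.

```python
def redundant_negatives(negatives):
    """Map each redundant broad match negative to the negative covering it."""
    word_sets = {n: frozenset(n.lower().split()) for n in negatives}
    redundant = {}
    for neg, words in word_sets.items():
        for other, other_words in word_sets.items():
            if other_words < words:  # strict subset: `other` blocks more
                redundant[neg] = other
                break
    return redundant

# Made-up negative keyword list
duplicates = redundant_negatives(
    ["hostel", "cheap hostel", "free", "hostel cheap berlin"]
)
print(duplicates)
```

Negatives with identical word sets in a different order, such as "cheap hostel" and "hostel cheap", are not reported by the strict subset test and would need a separate check.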

Block Close Variant Matches in Exact Match Ad Groups with this Google Ads Script

Even on exact match keywords, Google now matches a lot of “noisy” close variant search queries that often bring your performance down. This script blocks this traffic within ad groups where you only want to pay for the exact traffic.
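The selection logic behind this idea is simple and can be illustrated in Python. The sketch assumes you already have the list of queries that were matched to one exact match keyword. It is an illustration of the principle, not the script itself.

```python
def normalize(text):
    """Lowercase the text and collapse repeated whitespace."""
    return " ".join(text.lower().split())

def exact_negative_candidates(keyword, queries):
    """Return matched queries whose text differs from the exact keyword.

    These are the close variants you could add as exact match negatives
    in an ad group that should only receive the literal keyword.
    """
    target = normalize(keyword)
    return sorted({normalize(q) for q in queries} - {target})

# Made-up queries matched to the exact keyword "hostel berlin"
candidates = exact_negative_candidates(
    "hostel berlin",
    ["hostel berlin", "hostels berlin", "berlin hostel", "Hostel Berlin"],
)
print(candidates)
```

Only the reordered and plural forms remain as candidates, while differences in capitalization are ignored.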

Google Ads Script for adding non-converting N-grams as negative keywords

N-grams are a great way to get sufficient sample sizes on search patterns that were hidden before. This script analyzes the search term performance and adds new negative keywords based on non-converting N-grams. You can define the click threshold that should be used. If you want to start with an analysis first, also have a look at our online N-gram Tool.
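The click threshold rule can be illustrated with a short Python sketch. It assumes the N-gram KPIs are already aggregated into a dictionary. The threshold of 100 clicks and the sample numbers are illustrative, and the sketch only selects candidates, it does not upload anything.

```python
def negative_candidates(ngram_stats, click_threshold=100):
    """Return N-grams with enough clicks and no conversions at all.

    ngram_stats: {ngram: {"clicks": int, "conversions": float}}
    """
    return sorted(
        gram for gram, kpis in ngram_stats.items()
        if kpis["clicks"] >= click_threshold and kpis["conversions"] == 0
    )

# Made-up aggregated N-gram data
sample_stats = {
    "free": {"clicks": 150, "conversions": 0},
    "cheap": {"clicks": 40, "conversions": 0},
    "berlin": {"clicks": 900, "conversions": 25},
}
print(negative_candidates(sample_stats))
```

In this sample only "free" qualifies: "cheap" has no conversions either, but with 40 clicks it stays below the threshold until more data comes in.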